It’s a common challenge with remote productions: you want to bring in a guest who can contribute a lot to your content, but their feed comes in grainy and full of stutters and stops. The culprit: your guest’s less-than-stellar network conditions. Do you cut them from the program or live with the drop in quality?

With the Secure Reliable Transport (SRT) protocol, the answer is easy: count them in, and don’t worry about hurting your quality. SRT will compensate for the shortcomings of their network.

So, what is the SRT protocol, exactly? And how can you take advantage of SRT’s game-changing capabilities for streaming and remote video production? Read on for answers to these and other questions about this powerful protocol.

What is the SRT protocol?

SRT is an open-source video transport protocol developed by Haivision. SRT stands for “Secure Reliable Transport,” which reflects two of its major benefits for video and audio streaming: security and reliability.

The goal of SRT is to deliver high-quality, low-latency video streaming over unpredictable public networks, which it does by:

- Offering unparalleled control over video and audio transmission, including the ability to adjust latency, buffering, and other key parameters to suit network conditions

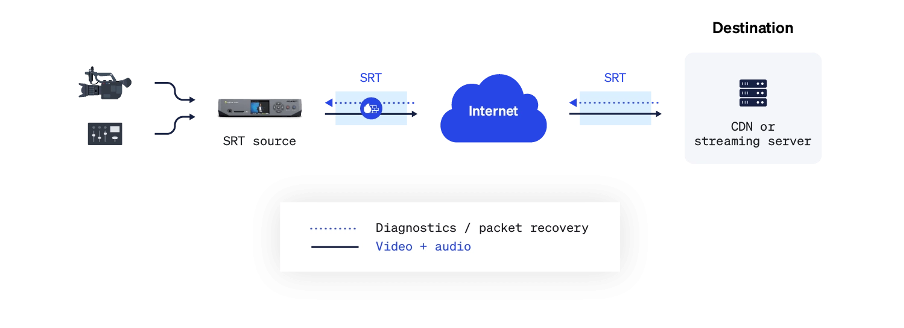

- Incorporating a unique, bi-directional User Datagram Protocol (UDP) stream that continuously sends and receives control data during streaming

- Compensating for packet loss, jitter, and other threats to quality based on UDP stream data
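To make the trade-off between latency and reliability concrete, here is a minimal Python sketch of the idea behind the points above. It is a toy model, not Haivision's implementation: it assumes every lost attempt costs roughly one round trip (a loss report travels to the sender and the resend travels back), and that a packet is only usable if it is recovered within the configured latency window. The names `simulate_recovery`, `losses`, `rtt_ms`, and `latency_ms` are made up for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class RecoveryResult:
    delivered: list[int]  # sequence numbers that made it in time
    dropped: list[int]    # sequence numbers that missed the latency window


def simulate_recovery(
    num_packets: int,
    losses: dict[int, int],
    rtt_ms: float,
    latency_ms: float,
) -> RecoveryResult:
    """Toy model of retransmission inside a fixed latency budget.

    `losses` maps a sequence number to how many times in a row that
    packet is lost before a copy finally gets through.
    """
    delivered: list[int] = []
    dropped: list[int] = []
    for seq in range(num_packets):
        lost_attempts = losses.get(seq, 0)
        # Assumption: each lost attempt costs about one round trip
        # (loss report to the sender, then the resend back).
        recovery_delay_ms = lost_attempts * rtt_ms
        if recovery_delay_ms <= latency_ms:
            delivered.append(seq)
        else:
            dropped.append(seq)
    return RecoveryResult(delivered, dropped)


if __name__ == "__main__":
    # Packet 2 is lost once, packet 5 three times, on a 50 ms round trip.
    tight = simulate_recovery(10, {2: 1, 5: 3}, rtt_ms=50, latency_ms=120)
    roomy = simulate_recovery(10, {2: 1, 5: 3}, rtt_ms=50, latency_ms=200)
    print("120 ms window dropped:", tight.dropped)
    print("200 ms window dropped:", roomy.dropped)
```

With a 120 ms window, packet 5 needs 150 ms to recover and is dropped; widening the window to 200 ms saves it. That is the basic reason a longer latency setting buys more resilience on a rough network.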

SRT is shaking up the realm of Internet streaming and the broadcast world. SRT technology can replace costly (and logistically problematic) satellite trucks and private networks for many remote video applications. Think reports from the field and contributions from guests in another city, country, or continent.

Is SRT better than RTMP?

From a technological standpoint, SRT is superior to the Real-Time Messaging Protocol (RTMP). A lot of it comes down to the fact that SRT is a more modern protocol. Ready for a little history lesson?

RTMP was created in the early 2000s primarily to stream video, audio, and data from servers to Macromedia’s Flash player. When Adobe acquired Macromedia in the mid-2000s, along with RTMP, the company repurposed the protocol for broader streaming. Ultimately, RTMP served its purpose. But it’s just not cut out for many modern streaming contexts.

So, what sets SRT apart from RTMP? Built into SRT is a unique, bi-directional UDP stream that continuously sends and receives control data during streaming. Through this, SRT can adapt to fluctuating network conditions to minimise packet loss, jitter, and other threats to quality. This makes the protocol viable for sending video over even poor or unpredictable networks, such as the public Internet.

Compare this to RTMP or RTMPS, which send source data to the target streaming server or content delivery network (CDN) without regard for any data that may get lost along the way. Often, the result is a suboptimal viewing experience for your audience at the other end.

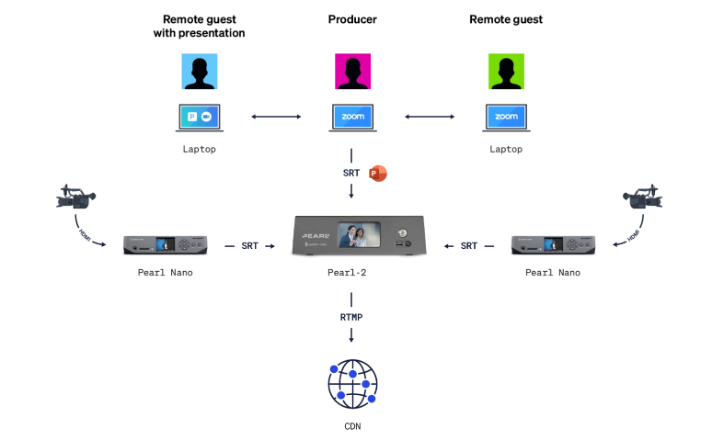

All that said, these protocols can co-exist. For example, you could use SRT to bring remote guest feeds into your production encoder but stream out your program via RTMPS, because that’s what your preferred CDN supports. In any case, your remote guest feeds will look and sound much better with SRT doing the heavy lifting on the contribution side.

Is SRT secure?

SRT is a highly secure streaming protocol. It’s what puts the “S” in “SRT,” after all.

The SRT protocol offers up to 256-bit Advanced Encryption Standard (AES) encryption, safeguarding data from contribution to distribution, and the protocol plays nice with firewalls. Multiple handshaking methods and flexible network address translation (NAT) traversal mean there’s rarely a need to ask a network admin for policy exceptions, or even to involve one in the first place.
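In practice, many SRT tools take these settings as query parameters on an `srt://` URL. The Python sketch below assembles such a URL using parameter names commonly seen in SRT tooling (`mode`, `passphrase`, `pbkeylen`, `latency`). Treat it as an illustration under assumptions: the helper `build_srt_url`, the host name, and the passphrase are hypothetical, and the accepted parameters and the unit of `latency` may differ from one product to another, so check your encoder's documentation.

```python
from urllib.parse import urlencode

# Key lengths in bytes for AES-128, AES-192, and AES-256.
VALID_KEY_BYTES = {16, 24, 32}
VALID_MODES = {"caller", "listener", "rendezvous"}


def build_srt_url(
    host: str,
    port: int,
    passphrase: str,
    key_bits: int = 256,
    mode: str = "caller",
    latency_ms: int = 120,
) -> str:
    """Assemble an srt:// URL with encryption settings (hypothetical helper)."""
    if key_bits % 8 != 0 or key_bits // 8 not in VALID_KEY_BYTES:
        raise ValueError("key_bits must be 128, 192, or 256")
    # The SRT library expects passphrases of 10 to 79 characters.
    if not 10 <= len(passphrase) <= 79:
        raise ValueError("passphrase must be 10 to 79 characters long")
    if mode not in VALID_MODES:
        raise ValueError(f"mode must be one of {sorted(VALID_MODES)}")

    query = urlencode(
        {
            "mode": mode,
            "passphrase": passphrase,
            "pbkeylen": key_bits // 8,  # key length is given in bytes
            "latency": latency_ms,      # assumed to be milliseconds here
        }
    )
    return f"srt://{host}:{port}?{query}"


if __name__ == "__main__":
    print(build_srt_url("studio.example.com", 9000, "correct-horse-battery"))
```

Both ends of the link need the same passphrase and key length, so it helps to generate the URL once and share it with the guest through a trusted channel rather than typing the settings in by hand on each side.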

Who uses the SRT protocol?

Since its invention by Haivision in the early 2010s, SRT has quickly become the go-to streaming protocol for remote contribution. For example, NASA uses SRT to distribute live video across control rooms for low-latency, real-time monitoring. One of the largest demonstrations of SRT’s potential was the 2020 virtual NFL draft in the US, where producers used SRT to deliver more than 600 live feeds.

Beyond high-profile examples, countless content creators have made SRT a key part of their video production workflows. That includes Epiphan: the SRT protocol is involved in the lion’s share of the company’s live show episodes, webinars, and other live productions.

What do you need to use SRT?

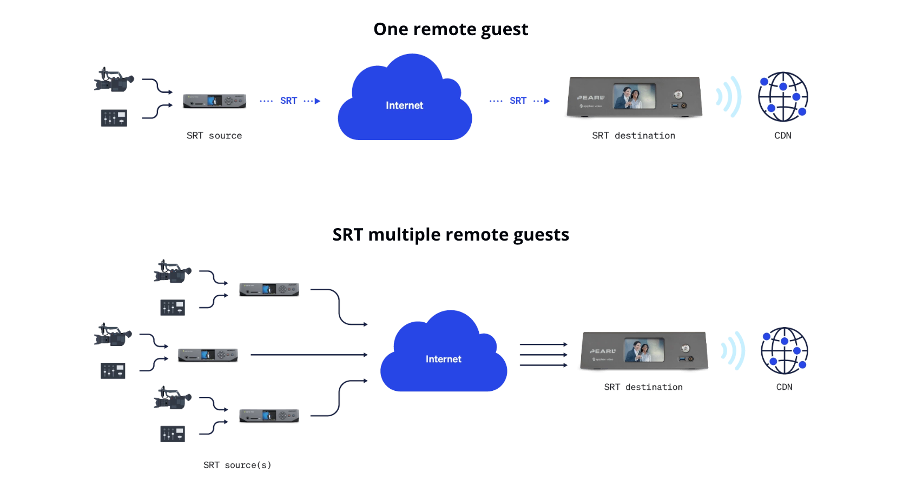

Depending on your application, you’ll need solutions that can encode and/or decode the SRT protocol. Any remote guests will need an SRT encoder to send an SRT signal over the Internet to the production encoder, which can then decode the signal and work with it for production.

Finding SRT-capable solutions

With SRT being an open-source, royalty-free technology that solves long-standing challenges, companies have been quick to adopt the protocol in their own products. These companies make up the SRT Alliance, whose members develop, manufacture, and operate SRT video encoders and decoders, content delivery networks, media gateways, capture cards, cloud infrastructure, and cameras.

This growing ecosystem is one of the biggest benefits of buying in. It means that, if you’re looking to use SRT yourself, there are plenty of options. For example, you could use dedicated hardware that supports this open-source protocol, like a camera or hardware encoder, or streaming software such as OBS Studio.

As well as being a frequent user of SRT, Epiphan is a proud member of the SRT Alliance. The company has brought SRT encoding and decoding to its award-winning Pearl video production systems. That includes Pearl Nano, the best SRT encoder for remote contribution.

Establishing a backchannel for real-time communication

When managing a broadcast, producers often need a way to communicate with guests and contributors in real time to ensure they’re set to go live and cue them up for their appearance. A backchannel for communication is also essential when your production involves interaction between remote participants.

Your backchannel for real-time interaction over SRT can be a separate SRT stream or a video conferencing platform. Which option makes the most sense depends on the performance of the networks involved as well as the physical distance between sources and the destination (i.e., the round-trip time). With high enough network bandwidth and low enough round-trip times, it’s possible to use a parallel SRT stream as a communication backchannel. Otherwise, a video conferencing platform can do the trick.
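As a rough way to reason about that choice, the Python sketch below turns it into a simple check. Every number in it is an assumption for illustration, not a figure from Epiphan or Haivision: the latency estimate of four times the round-trip time with a 120 ms floor, the 300 ms ceiling for comfortable conversation, and the 25% bandwidth headroom are all placeholders you should replace with values measured on your own links. The function name `choose_backchannel` is hypothetical too.

```python
def choose_backchannel(
    rtt_ms: float,
    uplink_kbps: float,
    program_kbps: float,
    backchannel_kbps: float,
    max_delay_ms: float = 300.0,   # assumed ceiling for natural conversation
    rtt_multiplier: float = 4.0,   # assumed rule of thumb for SRT latency
    min_latency_ms: float = 120.0, # assumed floor for the latency setting
    headroom: float = 1.25,        # assumed 25% spare bandwidth
) -> str:
    """Suggest a backchannel type from round-trip time and bandwidth."""
    # Estimate the latency the SRT link would need to stay reliable.
    srt_latency_ms = max(min_latency_ms, rtt_multiplier * rtt_ms)
    # The link must carry the program feed plus the backchannel, with margin.
    needed_kbps = (program_kbps + backchannel_kbps) * headroom

    if srt_latency_ms <= max_delay_ms and uplink_kbps >= needed_kbps:
        return "parallel SRT stream"
    return "video conferencing platform"


if __name__ == "__main__":
    # Nearby guest on a fast connection.
    print(choose_backchannel(40, 20_000, 6_000, 1_500))
    # Guest on another continent: the round trip is too long.
    print(choose_backchannel(180, 20_000, 6_000, 1_500))
```

In this model, the first guest gets a parallel SRT stream, while the second falls back to a video conferencing platform because the estimated latency (720 ms) is well past the assumed conversational ceiling.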

Master SRT with award-winning solutions

Epiphan Pearl hardware encoders fully support the SRT protocol. Pearl systems feature multiple built-in inputs for video and professional audio, simplifying setup by letting you directly connect advanced video and audio gear for the highest-quality SRT streams. Plus, end-to-end control through Epiphan Cloud makes it possible to configure and test contribution encoders located anywhere in the world. This reduces the chance of errors and simplifies production for everyone involved.

* The information in this blog is extracted from Epiphan.

About Epiphan

Epiphan Video provides award-winning, purpose-built hardware solutions that help your business create impactful video content.

The Epiphan Pearl range of hardware encoders is the ultimate system for maximum versatility with multi-encoding, multi-streaming, recording, custom layouts, switching, and more. It is ideal for use in live event production, enterprise communication, or lecture capture in higher education.

APTECH is the authorised Australian distributor of Epiphan Video products. Every Epiphan solution from APTECH is backed by local warranty and support.